Key Terms

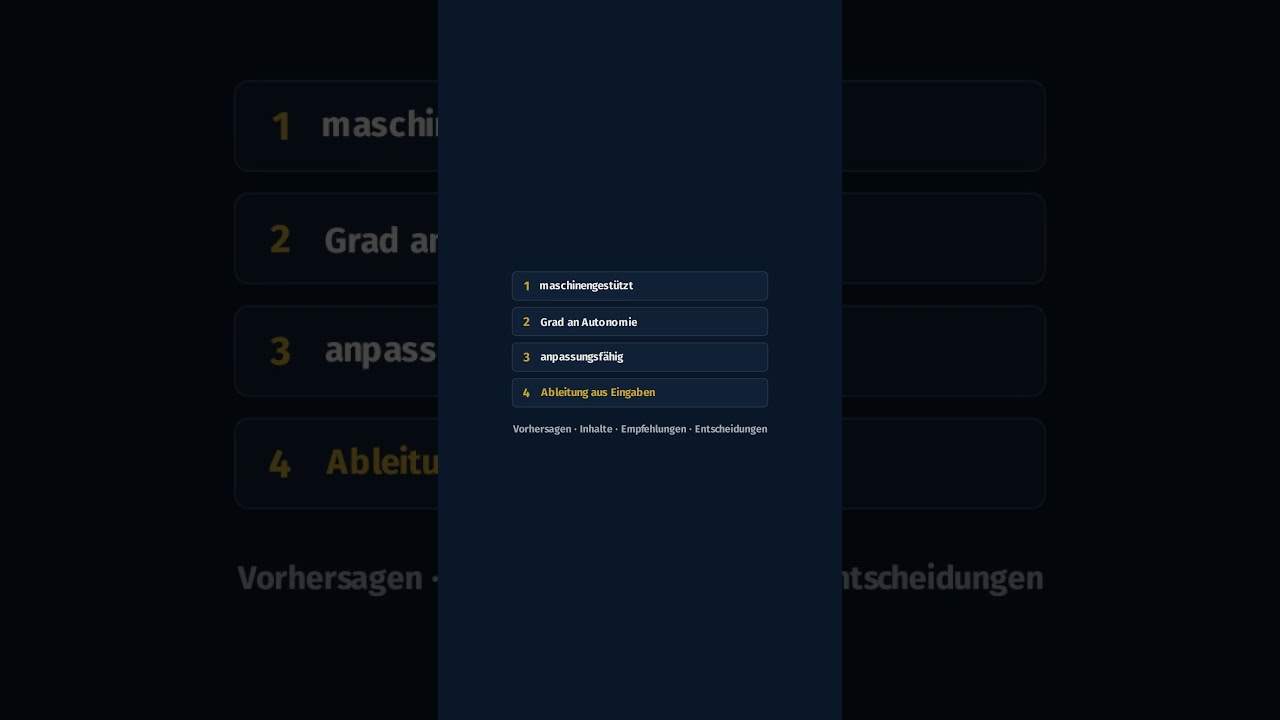

- AI system
  - A machine-based system designed to operate with varying levels of autonomy that may exhibit adaptiveness after deployment and that, for explicit or implicit objectives, infers from the input it receives how to generate outputs such as predictions, content, recommendations or decisions that can influence physical or virtual environments [Art. 3(1)].

- Provider
  - A natural or legal person, public authority or other body that develops an AI system or GPAI model, or has one developed, and places it on the market or puts the AI system into service under its own name or trademark, whether for payment or free of charge [Art. 3(3)].

- Deployer
  - A natural or legal person, public authority or other body using an AI system under its authority, except where the system is used in the course of a personal non-professional activity [Art. 3(4)].

- High-risk AI system
  - An AI system that either (a) is a safety component of a product, or is itself a product, covered by the Union harmonisation legislation listed in Annex I and subject to third-party conformity assessment, or (b) falls within a use area listed in Annex III, unless it does not pose a significant risk of harm to health, safety or fundamental rights [Art. 6].

- General-purpose AI model (GPAI model)
  - An AI model that displays significant generality, is capable of competently performing a wide range of distinct tasks and can be integrated into a variety of downstream systems or applications [Art. 3(63)].

- Conformity assessment
  - The process of demonstrating that a high-risk AI system meets the requirements of Chapter III, Section 2 before it is placed on the market or put into service, either through internal control (Annex VI) or with the involvement of a notified body (Annex VII) [Art. 3(20), Art. 43].

- Substantial modification
  - A change to an AI system after it has been placed on the market or put into service that was not foreseen in the provider's initial conformity assessment and that affects compliance with the requirements of Chapter III, Section 2 or alters the intended purpose [Art. 3(23)]. Such a change triggers a new conformity assessment [Art. 43(4)].

Frequently Asked Questions

What qualifies as an 'AI system' under the AI Act?

When is an AI system classified as 'high-risk'?

Are open-source AI models exempt from the Regulation?

What transparency obligations apply to chatbots and deepfakes?

What happens when an existing AI system undergoes a substantial modification?

Must high-risk AI systems be registered in the EU database?

What obligations do deployers of high-risk AI systems have?


In short — videos on this topic

60-second explainers from our YouTube channel. Click opens YouTube in a new tab — no YouTube embed, no tracking on this page.

0:58

0:58KI-Risikomanagement-Tool gekauft — reicht das für Art. 9 AI Act?

Opens YouTube 0:45

0:45AI Act: Warum Art. 17 ein Organisations- statt Technikgesetz ist

Opens YouTube 0:49

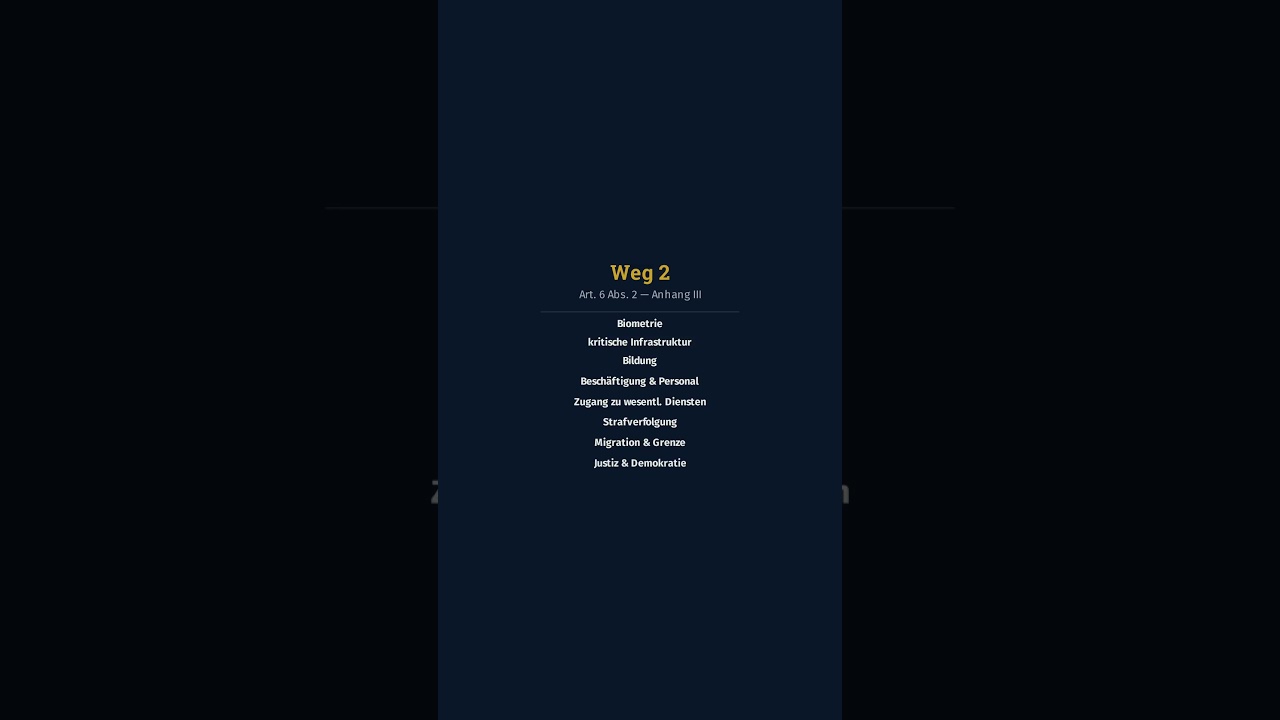

0:49Hochrisiko-System nach AI Act: Was Art. 6 Abs. 1 und 2 wirklich sagt

Opens YouTube 0:46

0:46Copilot im Einsatz: Wer trägt die AI-Act-Pflicht?

Opens YouTube 0:47

0:47Verbot oder Hochrisiko: Was den Unterschied ausmacht (Art. 5/6 AI Act)

Opens YouTube 1:00

1:00Wir entwickeln keine KI — gilt der AI Act trotzdem? (Art. 3)

Opens YouTube 1:00

1:00Nur intern, nicht zum Verkauf: Fällt der HR-Bot unter den AI Act?

Opens YouTube 0:53

0:53KI im Einsatz: Warum Governance Chefsache ist (AI Act)

Opens YouTube 1:00

1:00ChatGPT-Bot im Vertrieb: Wann gilt das schon als KI-System? (Art. 3)

Opens YouTube 1:00

1:00Wer haftet beim KI-Einsatz im Unternehmen? (Art. 26 AI Act)

Opens YouTube 0:44

0:44ChatGPT, Copilot, HR-Bot: Welche AI-Act-Stufe gilt? (Art. 5/6/50)

Opens YouTube 1:00

1:00Anhang III AI Act: Ist mein KI-System Hochrisiko? (Art. 6)

Opens YouTube